With more than 2 billion voters expected to go to the polls in 50 countries this year, the industry group Coalition for Content Provenance and Authenticity (C2PA) wants to lead the fight against deepfakes through metadata and provenance technology, which tracks the origins of an image.

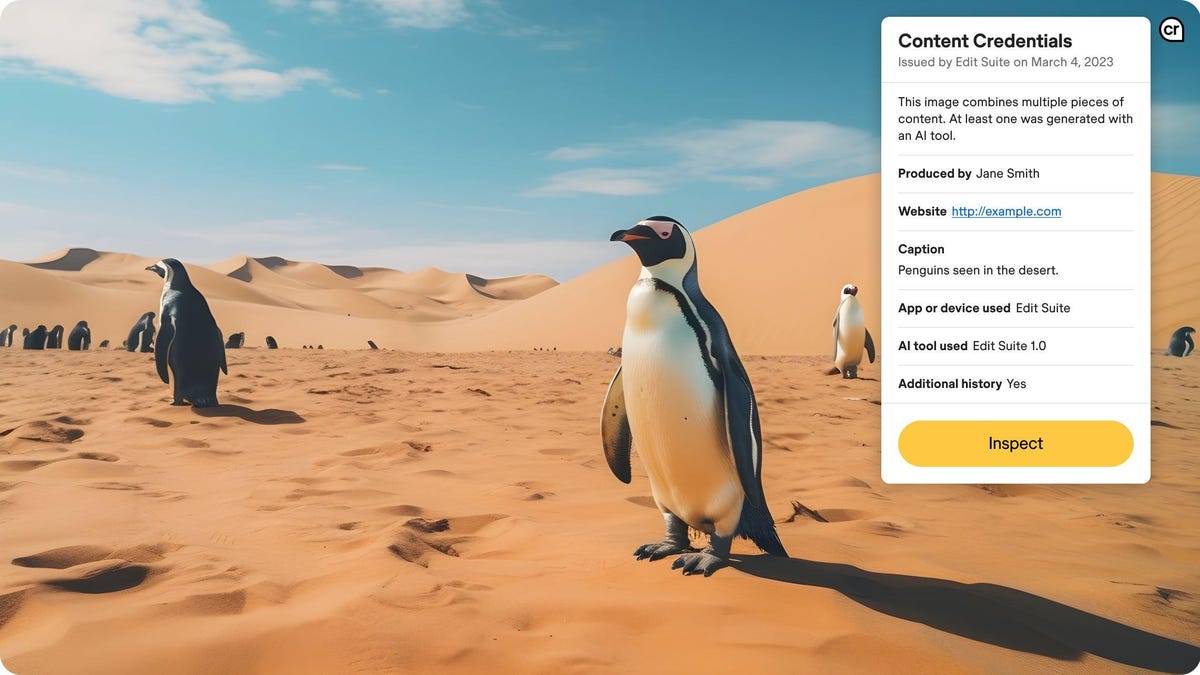

The idea is that AI-generated content should carry a label much like the nutrition labels on food: a consumer isn’t prohibited from buying a sugary cereal, but can walk into the store, know what’s in it, and make their own decision, said Andy Parsons, senior director at C2PA.

Google announced Thursday, Feb. 8 that it’s joining the coalition, whose members include Meta and Adobe, a move the industry hopes will signal to others the importance of labeling AI-made content. The goal of the group, which now has 100 members and “a lot of interest,” is to get content credentials onto all media.

“If you’re in news consumption mode, you should expect that all of your images, photos, packages of text, video, etc., would have content credentials proving that something comes from where it purports to be,” he said. “That’s how, you know, the lock icon [seen with HTTPS on the URL] in your browser started out. Initially, it was the exception. And now it’s so common and expected that you just don’t have a lock icon.” Doing so could reduce the potential for abuse by bad actors.

How tech companies are fighting fake images from bad actors

There’s no single way to combat deepfakes. Tech companies have been eager to get ahead of regulators when it comes to finding solutions, whether through disclosures or watermarks. It makes a lot of sense: with elections being so public, they want to make sure they have a good public image.

The coalition, which was started by Adobe, was born in 2019 out of the idea of creating a robust form of content provenance that connects the “ingredients and recipe” to the content. Around that time, a video depicting Nancy Pelosi slurring her words and sounding intoxicated, which was developed using machine-learning algorithms, was circulating online, and it inspired them to think about marking whether or not something was AI generated. In 2021, Adobe, together with companies like Microsoft and the BBC, created open-source implementations aimed at doing so.

So, how does it work? The C2PA standard is open source and tamper proof: information about which tool, such as AI or a camera, made something is effectively attached to the image. The technology is open source, too. If the metadata is intentionally stripped off, that will still be clear in the history, and the metadata can be recovered through the cloud. On the visual front, consumers see a “CR” symbol on the image, which they can click to see the “ingredients.”
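The idea of attaching tamper-evident provenance to an image can be illustrated with a minimal Python sketch. This is not the C2PA format or its API: real Content Credentials use certificate-based signatures and a defined manifest structure, while this example substitutes a shared-secret HMAC from the standard library. The names `make_manifest`, `verify_manifest`, and `DEMO_KEY` are hypothetical and exist only for illustration.

```python
import hashlib
import hmac
import json

# Hypothetical demo key (an assumption for this sketch). Real Content
# Credentials rely on certificate-based signatures, not a shared secret.
DEMO_KEY = b"demo-signing-key"


def make_manifest(asset: bytes, tool: str, key: bytes = DEMO_KEY) -> dict:
    """Record which tool made the asset and bind that claim to its bytes."""
    claim = {"tool": tool, "asset_sha256": hashlib.sha256(asset).hexdigest()}
    # Serialize with sorted keys so signer and verifier hash the same payload.
    payload = json.dumps(claim, sort_keys=True).encode("utf-8")
    signature = hmac.new(key, payload, hashlib.sha256).hexdigest()
    return {"claim": claim, "signature": signature}


def verify_manifest(asset: bytes, manifest: dict, key: bytes = DEMO_KEY) -> bool:
    """Return True only if neither the claim nor the asset has been altered."""
    claim = manifest["claim"]
    payload = json.dumps(claim, sort_keys=True).encode("utf-8")
    expected = hmac.new(key, payload, hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, manifest["signature"]):
        return False  # the claim itself was edited after signing
    # The claim is intact; now check that it still describes these bytes.
    return claim["asset_sha256"] == hashlib.sha256(asset).hexdigest()
```

In this sketch, changing either the image bytes or the recorded tool name makes `verify_manifest` return `False`, which is the property the "ingredients" label depends on.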

However, there’s no hard rule requiring companies to adopt these labels. In 2023, Adobe, which initiated the group, rolled out its generative AI model Firefly, which automatically attaches C2PA’s Content Credentials to any image generated with the AI-driven Photoshop. Microsoft followed, introducing Content Credentials to label all AI-generated images created through Bing Image Creator. OpenAI also announced this week that images generated in ChatGPT include metadata using C2PA specifications.

Google doesn’t yet have a policy on how it will adopt AI labels for its images. The company also notes that there are several ways to combat fake images, including embedding digital watermarks into AI-generated images and audio, political ad disclosures, and YouTube content labels that require creators to mark when videos are altered or synthetic.

What’s next

C2PA says it’s seeing adoption from camera makers like Sony and Nikon, which are producing cameras that offer authentication technology. Parsons said he would like to see mobile device manufacturers join the coalition and begin building AI labels into phones, where consumers can use them.

Given that generative AI also produces different modes of content, such as video, image, and audio, the coalition is also looking at research on what creating an AI label for audio would look like.
